Why Mid-Market Financial Institutions Struggle to Scale AI—and What It Will Take to Break Through

Over the past 18 months, the conversation around AI in financial services has shifted decisively. CEOs across regional banks and global institutions are increasingly focused on where AI will meaningfully change how work gets done—and what it will take to translate early activity into measurable business impact.

And yet, despite strong executive interest, progress inside most mid-market institutions tells a different story. There are pilots underway and no shortage of good ideas, but very little has scaled in a way that meaningfully changes how work gets done.

This gap between market urgency and operational reality continues to surface in our work with mid-market financial institutions, and it was a central theme of an executive roundtable we hosted in 2025 on AI in Financial Services. The challenge is not getting started with AI; it is operationalizing it.

Strong Executive Push, Limited Pull from the Business

One of the clearest patterns we see is top management out ahead of the organization. CEOs and leadership teams at global and super-regional financial institutions are actively pushing AI, putting it squarely on their teams’ agenda and creating top-down urgency. Boards are asking for concrete plans and timelines, and senior leaders are sponsoring development of use cases.

But inside the business, many front-line teams are still unclear how AI applies to their day-to-day work. Interest is uneven: some teams lean in, while others take a wait-and-see approach. Demand for AI solutions is often passive rather than self-initiated.

At one regional bank, leadership sponsored an enterprise-wide AI ideation effort. The result was dozens of submissions—but most were abstract (“use AI to improve underwriting”) rather than actionable (“summarize credit memos”).

What works better in these situations is more of a push-pull model:

· Targeted sessions showcasing specific use cases (e.g., document summarization in credit review, exception handling in operations)

· Structured intake to capture and refine ideas, coupled with proofs-of-concept and piloting

It’s the combination of exposure to practical AI use cases and translation into actual day-to-day workflows that begins to shift momentum.

Too Many Use Cases, Not Enough Throughput

Almost every institution we speak with has a growing backlog of AI ideas. The problem isn’t scarcity; it’s the inability to process those ideas and turn them into ROI.

We’re seeing organizations with 50-100+ use cases identified across business units with no consistent way to evaluate value or feasibility. Technology teams become the de facto gatekeepers, managing demand rather than enabling delivery. At one firm, the development team described their role as ‘triage’ – fielding inbound AI requests with no prioritization framework.

The firms making progress have introduced structured intake and prioritization, rapid proof-of-concept testing, and clear criteria for scaling versus stopping. These capabilities are the core building blocks of the ‘AI Factory’ concept featured at our 2025 roundtable—allowing organizations to test value quickly, scale what works, and stop what doesn’t.

Without a comprehensive approach like this, organizations stay stuck in pilot mode with limited ability to scale.
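To make the idea of a consistent prioritization framework concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a description of any institution's actual method: the 1-to-5 scoring scale, the 60/40 weighting of value against feasibility, the hours-saved threshold, and the example scores are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    value: int        # estimated business value, 1 (low) to 5 (high); hypothetical scale
    feasibility: int  # data and integration readiness, 1 (low) to 5 (high); hypothetical scale


def priority(uc: UseCase, value_weight: float = 0.6) -> float:
    """Weighted score; the 60/40 split is an illustrative assumption."""
    return value_weight * uc.value + (1 - value_weight) * uc.feasibility


def rank_backlog(backlog: list[UseCase]) -> list[UseCase]:
    """Order the backlog from highest to lowest priority score."""
    return sorted(backlog, key=priority, reverse=True)


def scale_or_stop(hours_saved_per_week: float, threshold: float = 20.0) -> str:
    """Apply one pre-agreed criterion after a proof of concept (threshold is hypothetical)."""
    return "scale" if hours_saved_per_week >= threshold else "stop"


# Example scores are made up for illustration only.
backlog = [
    UseCase("Use AI to improve underwriting", value=4, feasibility=1),
    UseCase("Summarize credit memos", value=4, feasibility=5),
    UseCase("Policy knowledge assistant", value=3, feasibility=4),
]
ranked = rank_backlog(backlog)
for uc in ranked:
    print(f"{uc.name}: {priority(uc):.1f}")
```

The specific weights matter less than the fact that every idea is scored the same way and that the scale-or-stop criterion is agreed before the pilot begins. In practice, the criteria would likely include more than one measure.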

A Small Set of Use Cases Drives Disproportionate Value

Despite long lists of possibilities, progress with AI adoption typically comes from a handful of practical, high-impact use cases.

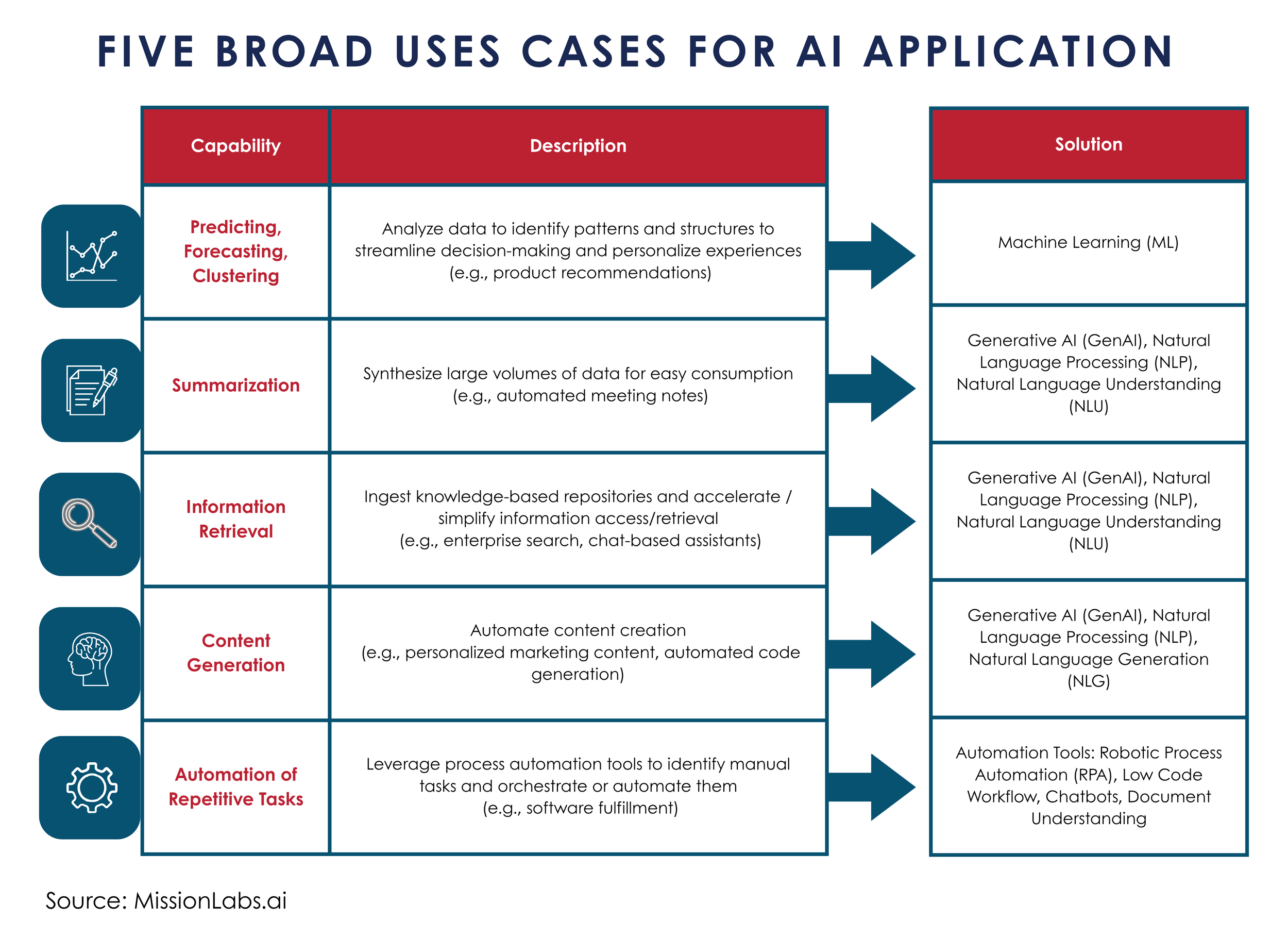

Across clients, we consistently see traction in areas where AI is already strong:

· Summarization of complex documents (credit memos, investor reports, servicing notes)

· Information retrieval across fragmented knowledge bases (e.g., querying policies, procedures, or deal data)

· Document processing within high-volume workflows (e.g., extracting and validating loan or insurance data)

· Predictive data analysis/forecasting to support decision-making

In one lending environment, streamlining document intake removed friction at a critical step in the process. In another, a knowledge assistant improved speed and accuracy in operations without requiring changes to core systems.

These use cases succeed because they reduce manual effort, improve data quality, create consistency, and generate capacity for higher-value work.

The lesson: rather than attempting to transform the business all at once, organizations should focus on building cumulative capacity and momentum.

Technology Is Available, but Is the Organization Ready?

In our client work, we find that most mid-market institutions are actively evaluating AI vendors and tools. At the same time, the market offers no shortage of options: GenAI platforms, workflow automation tools, retrieval-augmented generation tools, and off-the-shelf copilots.

Yet we repeatedly encounter the same constraints:

· Multi-year IT roadmaps already overcommitted

· Limited bandwidth and AI-native skills to support experimentation

· Fragmented data environments that hinder model performance

In many cases, teams spend significant time reconciling and preparing data rather than deploying solutions, slowing progress and reducing ROI.

By one estimate, data fragmentation forces development teams to spend up to 70% more time manually reconciling, cleaning, and preparing data instead of deploying AI, which bogs down projects and reduces overall ROI (Blend360, From Fragmented to Formidable: Building an AI-Ready Data Ecosystem, Astawa Alam, July 19, 2024).

Promising AI use cases often stall not because of model limitations, but because integration with core systems requires navigating multiple legacy platforms and approval processes.

Scaling AI therefore requires a more pragmatic approach: creating dedicated capacity rather than relying solely on existing teams, leveraging fit-for-purpose third-party tools where appropriate, and accepting that some solutions will initially operate alongside core systems rather than being fully integrated.

Risk and Governance: Engaged, But Not Yet Aligned

AI risk and governance is another area undergoing rapid evolution. Compared to even a year ago, risk functions are more actively engaged in AI discussions. That is a meaningful shift—but the frameworks themselves are still catching up.

Most institutions are comfortable with model risk, data privacy, and regulatory compliance because they are familiar domains. What is less developed is how to think about the business risks associated with AI-enabled decisioning—how outputs are used, how decisions are monitored, and where accountability sits.

As AI becomes embedded in operational workflows, this gap becomes more visible. The question is no longer just whether models are sound, but whether AI-informed decisions are appropriately controlled.

In discussions with risk leaders, the central question is becoming: “How do we govern AI-enabled decisions, not just AI models?”

Institutions making the most progress are expanding their focus beyond traditional technology and cybersecurity risk to include AI decisioning, embedding risk earlier in the innovation and design process, and aligning controls with how AI is actually used in the business.

The Real Work: Rewiring the Operating Model

These challenges point to a broader conclusion: scaling AI is not primarily a technology issue—it’s an operating model and people issue.

From our vantage point, what it takes to scale AI is clear:

· IT and DevOps expanding beyond traditional software delivery to support AI enablement and platform capabilities, while building additional capacity in parallel

· HR/Talent addressing new skill requirements and creating space for teams to engage and experiment

· Risk evolving from technology and data oversight to AI business risk management, with a greater role in co-design

· Business units taking ownership of use cases, outcomes, and adoption

This reflects a core theme from our roundtable: organizations only become “AI-ready” when operating model, governance, talent, and data are aligned and working together. A series of deliberate shifts over time—rather than a single transformation—will allow AI to become embedded into how work gets done.

What we’re seeing across mid-market financial institutions isn’t a lack of will or activity. It’s a lack of a consistent, repeatable process to turn that activity into impact and ROI.

Most organizations have moved past awareness and into experimentation but have not yet built the capabilities to scale AI.

The organizations that break through will not be the ones with the most experiments or the most sophisticated models, but those that:

· Create repeatable, structured pathways from idea to impact

· Focus on embedding practical, high-value applications into workflows

· Align business, technology, risk, and talent

· Treat AI as an operating model shift, not just a technology initiative.

For organizations navigating how to move from AI experimentation to scaled impact, the question is not where to start—it is where the system is breaking down.

At Clarendon Partners, we work with financial institutions to translate AI ambition into executable use cases, align business, technology, and risk, and build the operating model capabilities required to scale AI responsibly.

If your organization is looking to move beyond pilots and begin scaling AI with confidence, we welcome the conversation. Get in touch with us today to learn more.